Updates

Notes, experiments, and reflections from the Algorotoscope project.

Boston Massacre Models for the Semiquincentennial

I trained some new Tensorflow machine learning models on images of the Boston Massacre. Because of the way things worked int he moment, when the Boston Massacre drawing was create it was also recreated in different ways to spread the wor...

Read the update →

What Is With the Images Kin Lane Uses on His API Evangelist Website?

I love my algorotoscope work. It has grounded and guided my storytelling across Kin Lane and API Evangelist. It keeps me thinking about the system, but more ...

Read more →

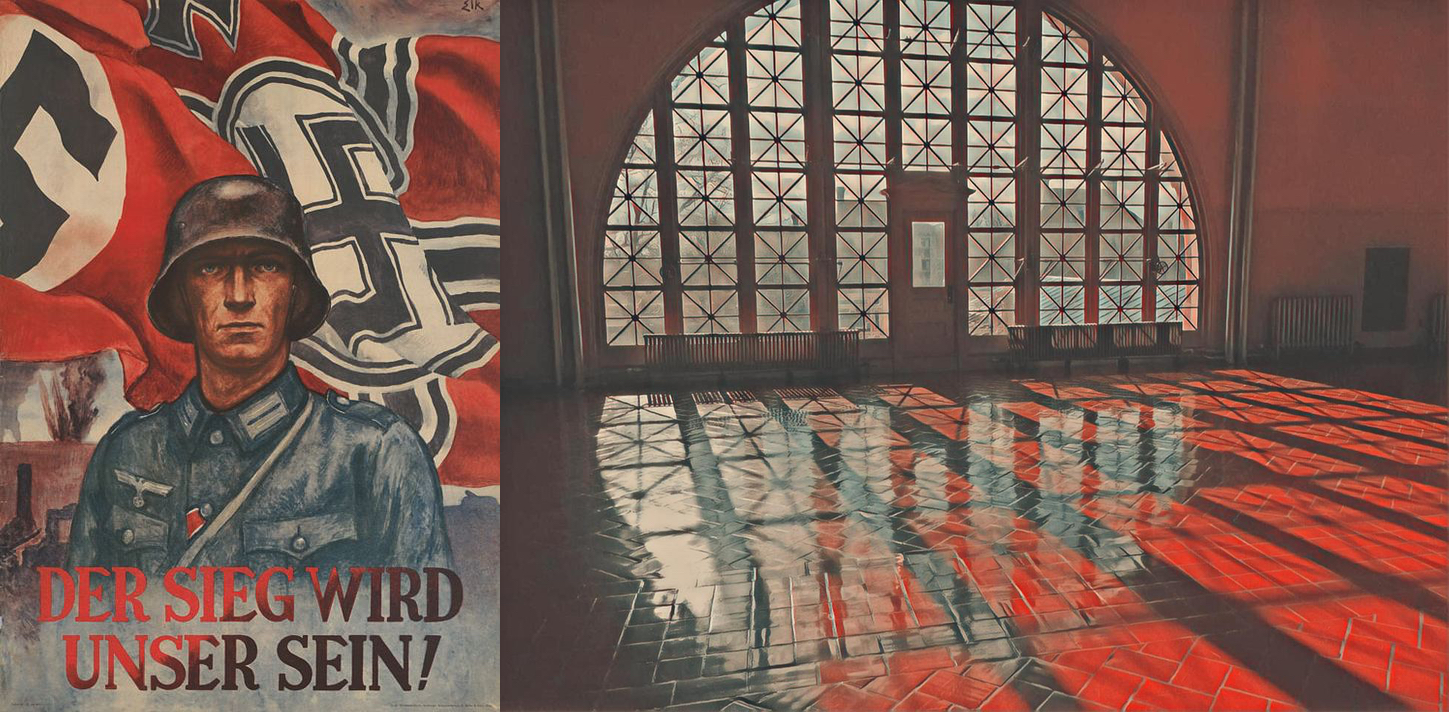

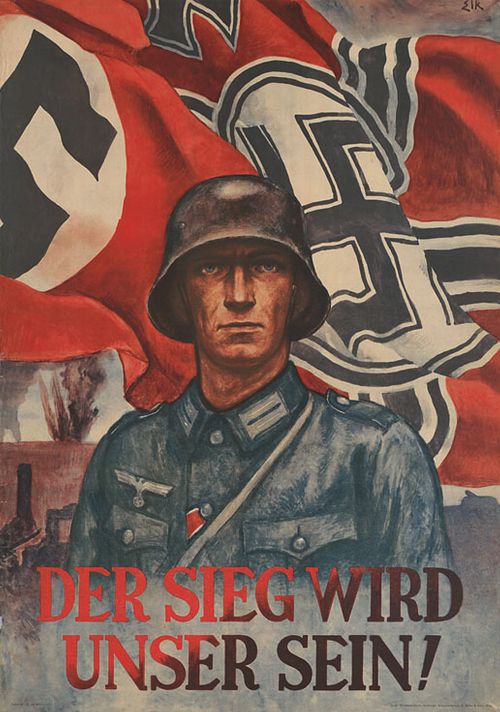

Using Francis William’s Story To Reveal Algorithmic Bias in Artificial Intelligence

A Man of Parts and Learning, by Fara Dabhoiwala on the portrait of Francis Williams is my favorite new Algorotoscope filters for revealing algorithmic bias a...

Read more →

Composite Algorotoscope Images

I spent some time making some new images with my B.F. Skinner Algorotoscope models the other weekend. I am pretty happy with my pallet of AI models right now...

Read more →

I Am Not Well

I am not well. I have something systemic wrong with me. I am not responsible for its origins, but I am responsible for perpetuating it. It is a condition tha...

Read more →

Seeing the Machine

I wish I could paint the pictures I have in my head from my work as the API Evangelist. The closest I can come is projecting light and transforming images as...

Read more →

Why I Do Algorotoscope

I started Algorotoscope as an escape from the 2016 election. I wanted to learn more about machine learning and TensorFlow while simultaneously wanting to esc...

Read more →

Rebuilding the Texture Transfer ML Process

I had an AWS machine image that had my texture transfer process all setup. I had my AWS account compromised and someone spun up servers across almost every A...

Read more →

Flipping the Polarity of the Relationship Between Models and Images

Historically the ML models I have trained for Algorotoscope have been fairly dark in nature. I had the training wheels of the models that came with the ML pr...

Read more →

Landing on a Stable Instance of Tensorflow on Amazon to Train Models

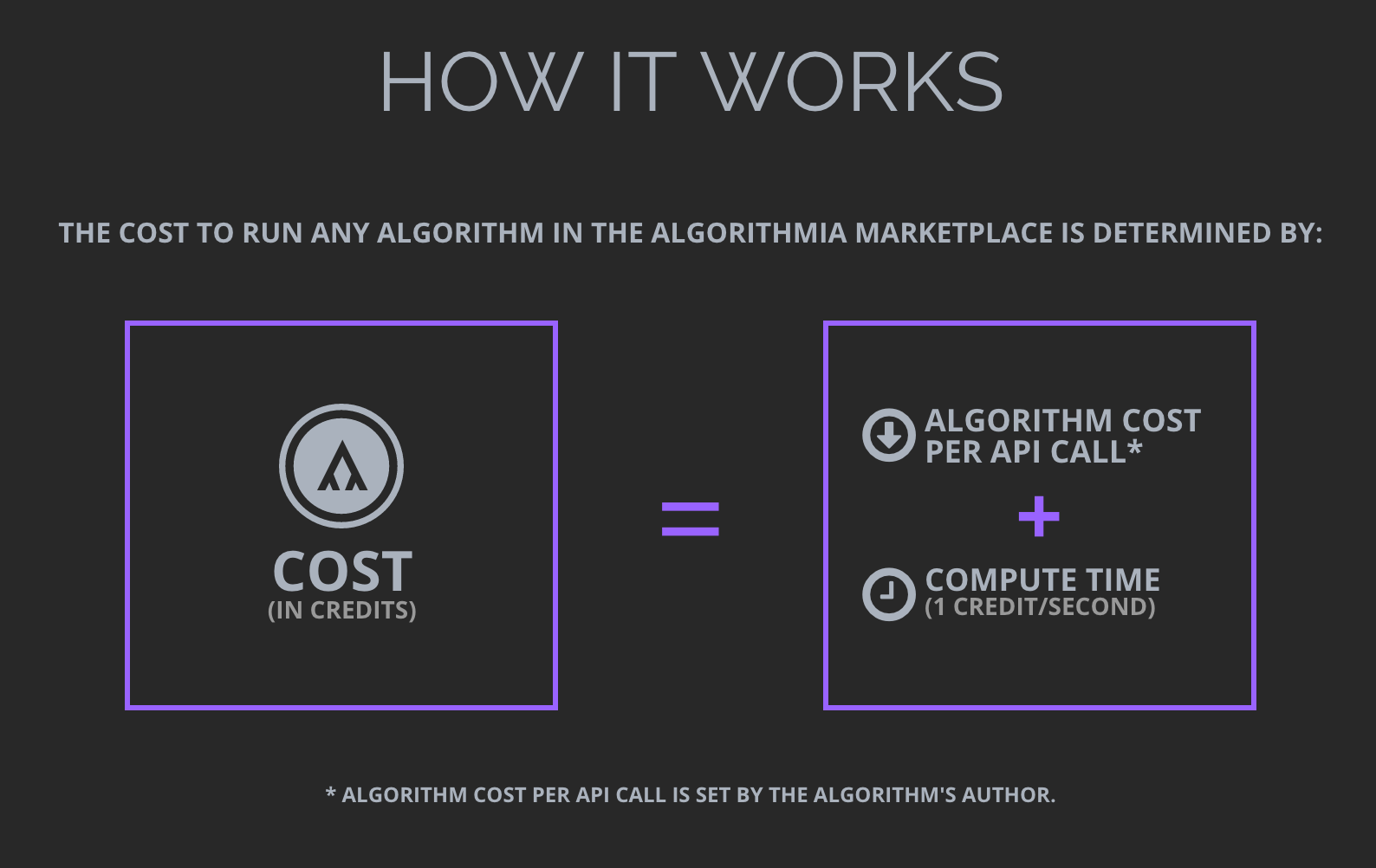

I have bounced around quite a bit from the original Tensorflow model I was using when I started this work. Algorithmia had done a lot of the heavy lifting fo...

Read more →

Adding Some Collections of My Algorotoscope Work

After organizing a couple of the latest batches of images I produced as part of my algorotoscope work I wanted to step back and see what I had made. I wanted...

Read more →

Showing What Algorithmic Influence On Markets Leaves Out

I’ve been playing with different ways of visualizing the impact that algorithms are making on our lives. How they are being used to distort the immigration d...

Read more →Highlighting Algorithmic Transparency Using My Algorotoscope Work

I started doing my algorotoscope work to better understand machine learning. I needed a hands-on project that would allow me to play with two overlapping sli...

Read more →

Why Would People Want Fine Art Trained Machine Learning Models

I'm spending time on my algorithmic rotoscope work, and thinking about how the machine learning style textures I've been marking can be put to use. I'm tryin...

Read more →

Machine Learning Style Transfer For Museums, Libraries, and Collections

I putting some thought into some next steps for my algorithmic rotoscope work, which is about the training and applying of image style transfer machine lear...

Read more →

The Russian Propaganda Distortion Field Around The White House

I am having a difficult time reconciling what is going on with the White House right now. The distortion field around the administration right now feels lik...

Read more →

Algorithmic Reflections On The Immigration Debate

We are increasingly looking through an algorithmic lens when it comes to politics in our everyday lives. I spend a significant portion of my days trying to ...

Read more →

When The Companies Who Have All Your Digital Bits Promise Not To Recreate You

I'm thinking about my digital bits a lot lately. Thinking about the digital bits that I create, the bits I generate automatically, the bits I own, the bits I...

Read more →

Algorithmia's Multi-Platform Data Storage Solution For Machine Learning Workflows

I've been working with Algorithmia to manage a large number of images as part of my algorithmic rotoscope side project, and they have a really nice omni...

Read more →Finding The Right Dystopian Filter To Represent The World Unfolding Around Us

I got sucked into a project over the holidays, partly because it was an interesting technical challenge, but mostly because it provided me with a creative di...

Read more →

Exploring The Economics of Wholesale and Retail Algorithmic APIs

I got sucked into a month long project applying machine learning filters to video over the holidays. The project began with me doing the research on the eco...

Read more →

Learning About Machine Learning APIs With My Algorithmic Rotoscope Work

I was playing around with Algorithmia for a story about their business model back in December, when I got sucked into playing with their DeepFilter service,...

Read more →Make It An API Driven Publishing Solution

Once I had established a sort of proof of concept for my algorithmic rotoscope process, and was able to manually execute each step of the process from separa...

Read more →Unique Algorithmic Filters Is Where It Is At

I am having a blast with the image texture filters that Algorithmia includes as part of their DeepLearning service. I've been playing with how each filter wi...

Read more →Opportunity In Breaking Up Videos Into Separate Images

I have been working my way through about 300 GB of drone and GoPro videos from this summer. One of the lingering thoughts I was having throughout this proces...

Read more →

Algorithmic Rotoscope

I had come across Texture Networks: Feed-forward Synthesis of Textures and Stylized Image from Cornell University a while back in my regular monitoring of th...

Read more →